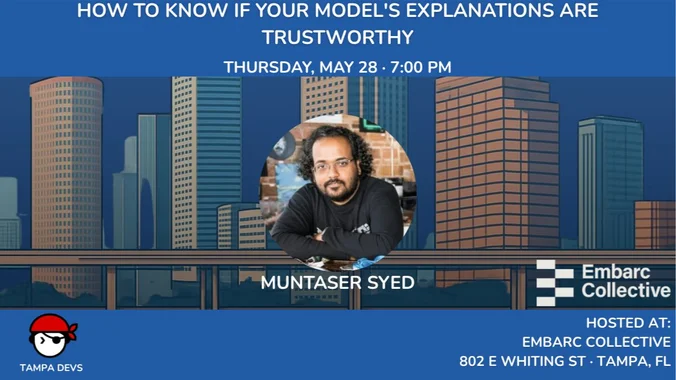

XAI Evaluation: How to Know If Your Model's Explanations Are Trustworthy

Thursday, May 28, 2026

11:00 PM UTC

17 attending

Location

Embarc Collective

About this event

Popular explainability methods like SHAP, LIME, and Integrated Gradients often yield conflicting results for the same model. How do you decide which one to trust? This hands-on workshop moves beyond "visual intuition" to introduce quantitative evaluation of XAI, providing objective tools to measure explanation quality.

Working through three interactive Google Colab notebooks, participants will:

- Identify Disagreement: Generate multiple explanations on real datasets to see exactly where and why they diverge.

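- Apply Metric Frameworks: Measure explanations across three critical dimensions: Faithfulness (how accurately the explanation reflects the model's behavior), Robustness (stability under small input changes), and Complexity (how concise, and therefore how interpretable, the explanation is).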

- Build Evaluation Pipelines: Create comparison tables and learn a decision framework for choosing explainers based on use cases like regulatory audits or stakeholder communication.
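
To give a feel for what a quantitative comparison can look like, here is a minimal sketch in Python using only NumPy. It is not taken from the workshop notebooks. The toy linear model, the two stand-in explainers, and the specific metric definitions (deletion-based correlation for faithfulness, maximum attribution change under noise for robustness, entropy for complexity) are all assumptions chosen for illustration. In practice SHAP, LIME, or Integrated Gradients would take the place of the stand-in explainers.

```python
import numpy as np

# Hypothetical toy model: a fixed linear scorer (an assumption for illustration).
weights = np.array([2.0, -1.0, 0.5, 0.0])

def model(x):
    return float(weights @ x)

# Stand-in explainer 1: gradient * input, which is exact for a linear model.
def explain_grad_x_input(x):
    return weights * x

# Stand-in explainer 2: a fixed attribution vector that ignores the model.
def explain_fixed(x):
    return np.array([0.1, 0.9, -0.3, 0.5])

def faithfulness(model, explain, x, baseline=0.0):
    """Correlation between attributions and the output drop when each
    feature is replaced by a baseline value (higher is better)."""
    attr = explain(x)
    drops = []
    for i in range(len(x)):
        x_removed = x.copy()
        x_removed[i] = baseline
        drops.append(model(x) - model(x_removed))
    return float(np.corrcoef(attr, drops)[0, 1])

def robustness(explain, x, rng, eps=0.01, n_samples=20):
    """Largest L2 change in attributions under small Gaussian input noise
    (lower means more stable)."""
    attr = explain(x)
    changes = [
        np.linalg.norm(explain(x + rng.normal(scale=eps, size=x.shape)) - attr)
        for _ in range(n_samples)
    ]
    return float(max(changes))

def complexity(explain, x):
    """Entropy of the normalized absolute attributions
    (lower means the explanation concentrates on fewer features)."""
    p = np.abs(explain(x))
    p = p / p.sum()
    p = p[p > 0]  # skip zero entries, which contribute nothing to the entropy
    return float(-(p * np.log(p)).sum())

x = np.array([1.0, 2.0, 3.0, 4.0])
explainers = {"grad_x_input": explain_grad_x_input, "fixed": explain_fixed}

# Build a small comparison table: one row of scores per explainer.
results = {}
for name, explain in explainers.items():
    rng = np.random.default_rng(0)  # same noise draws for every explainer
    results[name] = {
        "faithfulness": faithfulness(model, explain, x),
        "robustness": robustness(explain, x, rng),
        "complexity": complexity(explain, x),
    }

print(f"{'explainer':<14}{'faithfulness':>14}{'robustness':>12}{'complexity':>12}")
for name, row in results.items():
    print(f"{name:<14}{row['faithfulness']:>14.3f}"
          f"{row['robustness']:>12.4f}{row['complexity']:>12.3f}")
```

The fixed explainer is perfectly stable because it never changes, yet it scores poorly on faithfulness. That is the kind of trade-off a comparison table makes visible, and it is why no single metric is enough to choose an explainer.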